How to use AI and Automation for Ethical Hacking and Vulnerability Assessment

Introduction

Automation is the holy grail for most industries. Think about it. The printing press was automated writing. The robotic assembly line is the automated creation of products. Code is the ultimate automation. It's automation of computation. But there are still unsolved automation problems. Perfect automated stock trading would be free money. Automated writing could fill endless books. Automated coding could dramatically increase the world's GDP.

What if I told you that many of the untapped industries for automation were finally feasible with the advent of best-in-class Large Language Models (LLMs)? They are. The degree of impact and coverage for what's possible is still up for debate, but Paul Graham said that more than half the founders in the current Y Combinator batch are AI startups.

A prominent and important field lacking any end-to-end automation is cybersecurity. Some vulnerability assessment tools are automated. Notably tools such as Nessus and Nuclei. Nuclei can certainly find some vulnerabilities without hand-holding. That said, they still pale in comparison to human hackers.

The Age of Cybersecurity Automation

Cybersecurity automation is going to grow quickly over the next few years. It's always been an industry where automation helps humans. The harder problems have been beyond the grasp of code-only solutions. That is, until now. While we'll likely never see 100% automation, LLMs are a key piece of tech which can now enable much more than code alone.

For blue teams, imagine having infinite tier one analysts that can summarize, triage, and slap an approximate risk score on every alert. Imagine having infinite cybersecurity table-top exercise ideas. Imagine a tool that can look at an organization as a whole and point out the highest risks. The AI-powered tools to solve these problems are in-the-making.

For the offensive side of the house, expect similar outcomes. Manual vulnerability assessments often face a limitation of scale, which is one reason bug bounty has been so successful. Imagine if we could unleash 10,000 AI-agent hackers on an organization.

The Role of AI in Ethical Hacking

A proper implementation of AI in ethical hacking could lead to substantial improvements in vulnerability detection. AI's strengths, such as pattern recognition, coding, and deep understanding of existing concepts, are core competencies of ethical hackers.

The vision of AI-driven cybersecurity looks promising. Imagine this: an entire network being scanned in minutes, with vulnerabilities pinpointed accurately, not just based on existing databases but through on-the-fly pattern recognition. Think of AI agents adapting to new threat patterns almost instantly, learning from every attempt, successful or failed, to infiltrate a system.

It may sound far-fetched, but the core vulnerabilities that are found across applications today are well understood. Cross-site scripting, server-side request forgery, code execution, and many others have known payloads for testing. They also usually have clear signs of compromise upon successful exploitation. The payloads and validation criteria are also well-documented in the training data that today's LLMs are trained on.

Furthermore, AI can tirelessly work. They can be attacking an application or website around the clock. Defensively, they can analyze vast amounts of network traffic, detecting anomalies that might be indicative of previously unknown attack vectors. This would give organizations an unprecedented understanding of their data and audit logging.

Spotlight on AI Hacking Tools

There are already ethical hacking AI tools being released. At Black Hat last month, a company named Vicarius showed off "vuln_GPT", a generative artificial intelligence tool that identifies and repairs software vulnerabilities. As this tech improves, the world will have security pull requests in git repos constantly available for mistakes that human (and AI) developers are making. Hacker AI is another artificial intelligence tool that scans source code to identify potential security weaknesses that may be exploited by hackers or malicious actors.

The upcoming Rust-based web proxy hacking tool, Caido, added LLM support to their tool recently which will use the current request as context for the prompt. This will help testers context switch much less, as they can reformat their requests as well as come up with creative attack ideas without ever pivoting out of Caido.

Another example is a popular modern, open source vulnerability scanner, Nuclei, recently added an AI-powered hub to help "create, debug, scan, and store templates.". This is one step towards automated exploit creation. Current models can already write basic exploits, but fine-tuning a model on nuclei templates or all the metasploit modules would make for a formidable exploit-writing tool.

Best Practices for Integrating AI Tools in Ethical Hacking

Integrating ethical hacking AI tools into existing cybersecurity operations might seem hard, but it's all about the how. Here are some steps you can follow to make it easier.

- Know your infrastructure: Every cybersecurity professional knows asset management comes first. Understand the extent and capabilities of your existing security setup.

- Identify the gaps: Determine the areas where coverage is lacking, difficult, or there's significant spending on tooling or more-importantly, man-power.

- Dream big: Have a team member or twelve keeping an eye on ethical hacking AI tools and applications that are being developed, as there will be a lot of growth in that area. Also, spend time brainstorming potential AI use-cases. Security staff can be skeptical. Many are specifically skeptical when it comes to AI. Set ground rules that it shouldn't be an AI bash-fest.

- Proof of concept: Build out a proof of concept of the best ideas to check their feasibility. Don't give up if it doesn't work at first. Invest decent time towards prompt improvement. Alternatively, consider purchasing AI-powered applications that alleviate the problems for far less than current spend.

- Push for prod: Start with a single, non-critical part of your operations, and gradually extend AI's role after reviewing its performance and tweaking the processes.

Remember, AI tools are merely tools; your team's insights and decisions will still guide them. As AI keeps getting better, cybersecurity professionals must continue learning and updating our toolkits.

Secret Weapon: The Crowd

The power of AI in cybersecurity automation and vulnerability assessments will develop faster if it is curated and influenced by the crowd. This strategy involves feeding an AI system with vast amounts of data from various sources – the collective knowledge of the "crowd".

Given the necessary resources and training, these AI systems can learn from the input, turning them into advanced engines. These engines, given proper code-wrapping and prompts, could generate detection logic, triage alerts, and potentially even manually hunt for vulnerabilities.

Ethical Considerations

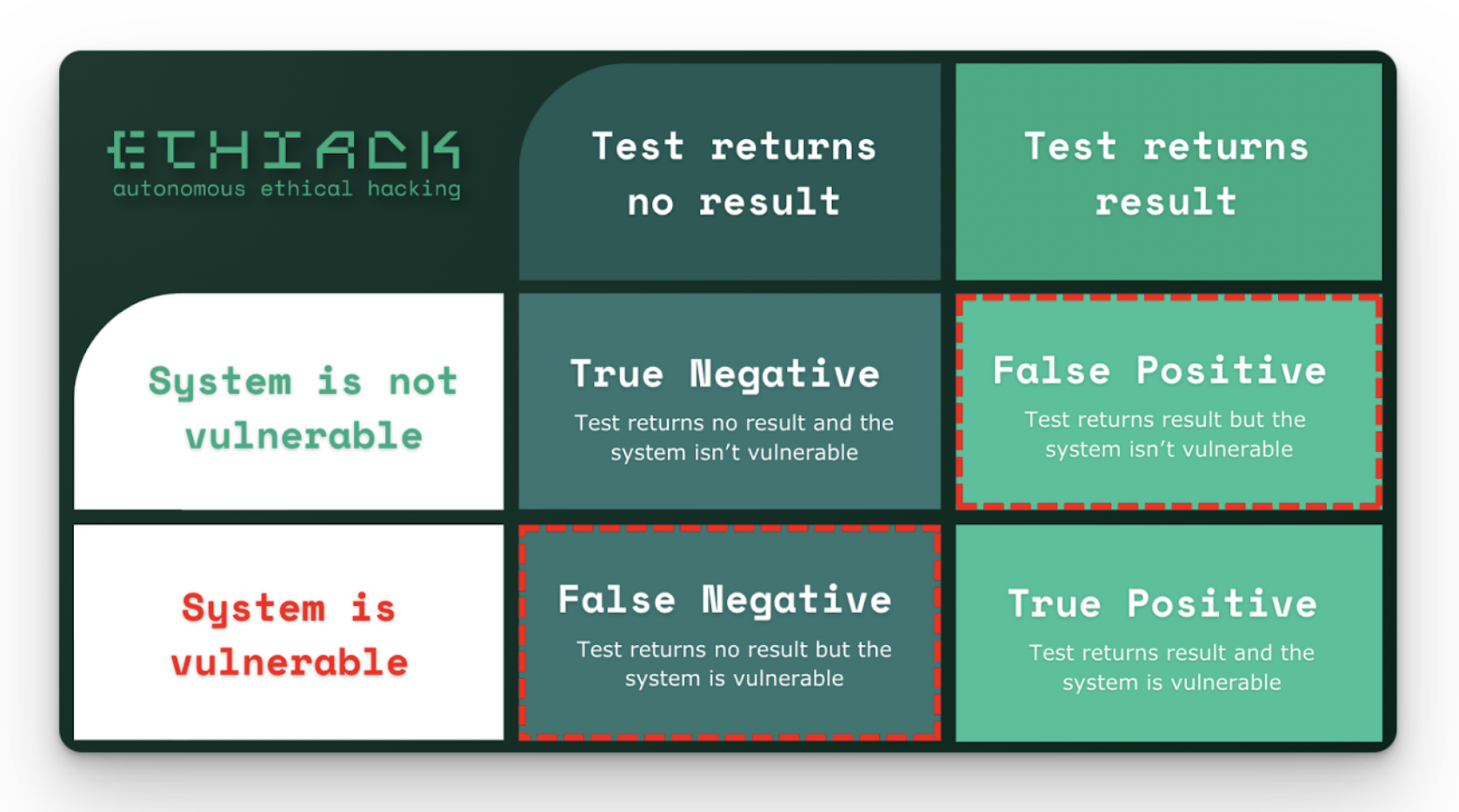

There are many interesting ethical considerations when using AI hacking tools or using AI for cybersecurity automation. One of the major issues with AI tools is the integrity of their output. In the cybersecurity space, this is generally defined as false positives or false negatives. One other major concern is whether or not it’s safe to give an AI agent access to hacking tools. Let’s discuss both of those.

What type of false positive and false negative rate do we accept from AI tooling?

Human SOC analysts miss things, and their skill varies person-to-person. Also, expecting perfection from AI is unrealistic. It's essential to strike a balance between AI efficiency and human oversight, ensuring that the combined effort maximizes threat detection while minimizing errors.

Similarly, AI hacking tools are going to miss things high quality human testers would find. However, they might find bugs that human testers missed. Incorporating AI into hacking can provide a wider perspective and be comprehensive in a way where humans might overlook things. Embracing this collaboration can lead to more robust testing.

Can we trust autonomous agents with hacking capabilities?

In Bostom's Superintelligence, he touches on how AI systems which could enhance themselves might choose to become proficient in cybersecurity. This would allow them to propagate into other systems by bypassing security controls. In general, current LLMs don't show any signs of this behavior, but as with software today getting upgrades, the systems we build today may slowly be enhanced by "plugging in" new models. If a new model were to be capable and also have a desire to propagate, having already handed it hacking abilities might turn out to be a terrible decision.

There are also concerns about the arms race in AI - as white hat hackers leverage AI to shore up defenses, black hat hackers will inevitably use AI to find novel ways to use it as well. Often the bad guys are quicker to capitalize on advantages. This might end up being the same way. It's an interesting angle to think about - although you could say this should hasten the use of AI for cybersecurity defense if anything, because the bad guys are likely to capitalize on that advantages of AI whether we do or not.

Recommended Development Guidelines

Industry standards haven't truly evolved for AI-based feature and applications, but nearly everyone would agree on a few core tenants:

AI Usage Disclosure

Companies ought to declare the use of AI in distinct app features to customers, especially when it comes to security. This way, users can make an educated decision about whether to use the feature, with an understanding of the strengths and limitations that using an AI model will bring.

Data Usage Guidelines

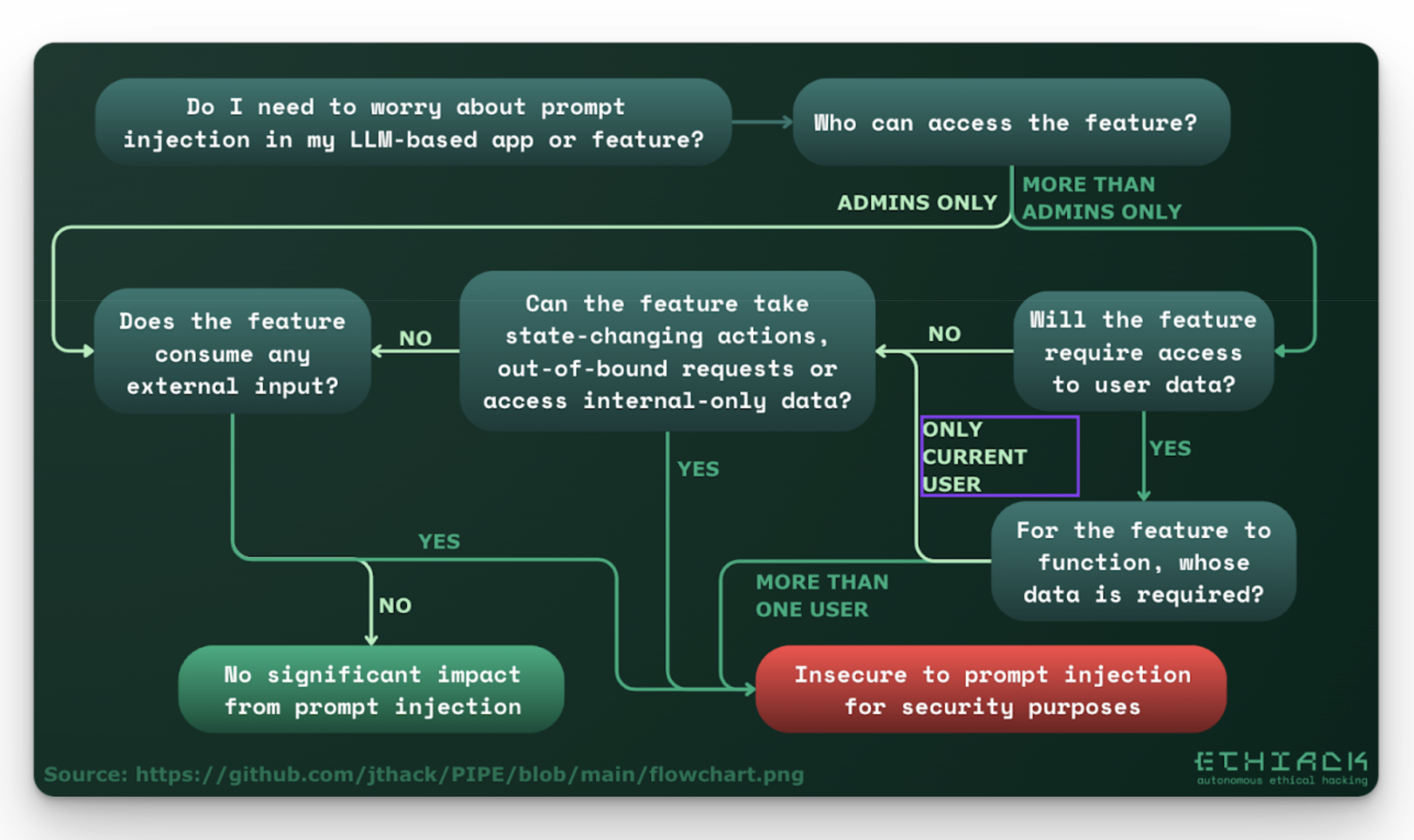

Specifically whether or not the data will end up in an LLM. This is for two main reasons. One, many companies may want control over what data is used for training or fine-tuning. Those details should be specified in the contract. Two, any input going into an LLM for processing needs to be scrutinized. If the data contains a prompt injection payload, the risks can range from deception of the system to RCE depending on the framework.

Required Security Controls

If the application uses any untrusted input as a context for prompts which take any impactful actions--or even help humans make critical decisions--then there should be some form of prompt injection protection. This single point could be turned into an article series, but suffice it to say that most products will need some form of prompt injection protection.

Conclusion and Future Outlook

With any groundbreaking technology, there will be learning curves and teething problems. The integration of AI into cybersecurity is still in its infant stage, and many challenges lie ahead. However, if history is any indication, technological progress will prevail, especially when powered by human ingenuity.

With the combined efforts of the global cybersecurity community and advances in AI, we can be cautiously optimistic about the future. The ideal scenario is one where AI-driven tools seamlessly blend with human expertise, creating a robust and agile cybersecurity infrastructure that can adapt and evolve in real-time.

One such tool is ETHIACK. They have AI hacking agents which will help secure your online presence. Try their innovative AI-assisted ethical hacking solution for free for 1 month.